The Welsh Speech Dataset is a comprehensive collection of high-quality audio recordings from native Welsh speakers across Wales and Patagonia, Argentina. This professionally curated dataset contains 175 hours of authentic Welsh speech data meticulously annotated for machine learning applications.

Welsh, a Celtic language with official status in Wales spoken by over 500,000 people, is captured with distinctive phonological features essential for developing accurate speech recognition systems supporting Celtic language revitalization and cultural preservation.

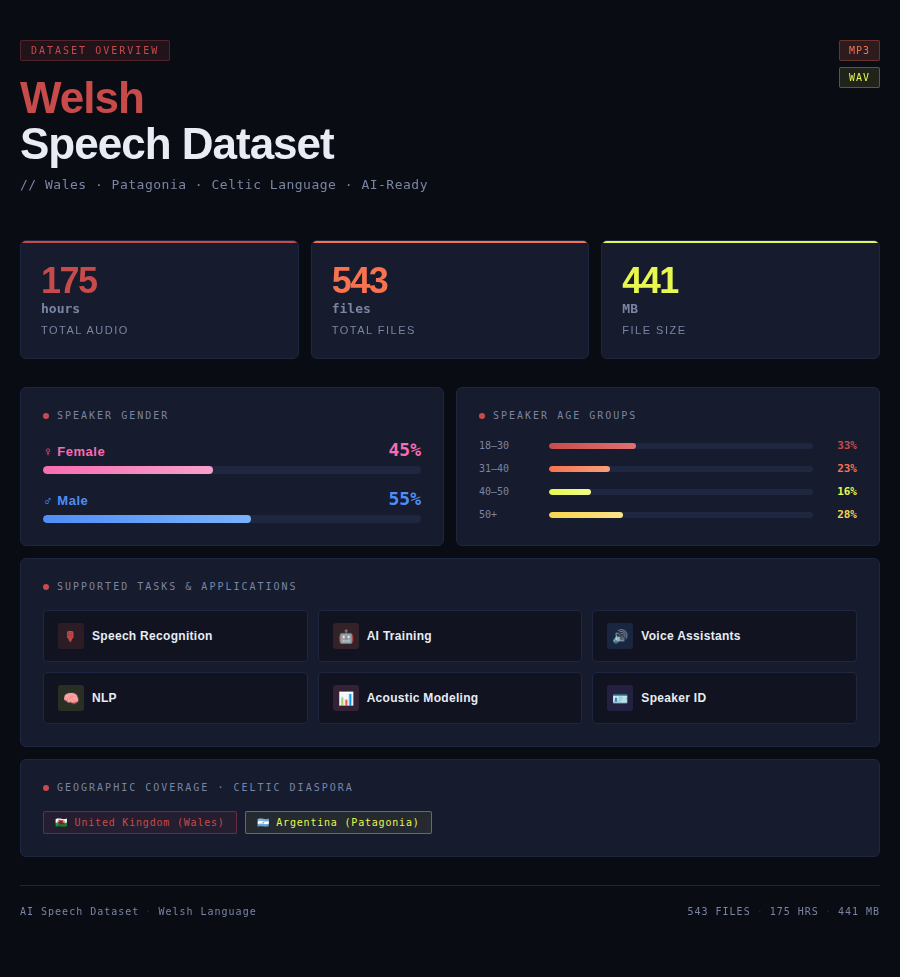

Dataset General Info

| Parameter | Details |

|---|---|

| Size | 175 hours |

| Format | MP3/WAV |

| Tasks | Speech recognition, AI training, voice assistant development, natural language processing, acoustic modeling, speaker identification |

| File size | 441 MB |

| Number of files | 543 files |

| Gender of speakers | Female: 45%, Male: 55% |

| Age of speakers | 18-30 years: 33%, 31-40 years: 23%, 40-50 years: 16%, 50+ years: 28% |

| Countries | United Kingdom (Wales), Argentina (Patagonia) |

Use Cases

Language Revitalization and Education

Welsh educational institutions can utilize the Welsh Speech Dataset to develop language learning applications, immersion education tools, and literacy platforms. Voice technology supports Welsh language revitalization efforts, enables interactive learning experiences, facilitates pronunciation practice for learners, and strengthens Welsh language transmission across generations. Applications include Welsh medium school resources, adult learning platforms, children’s educational games, and pronunciation training tools supporting Wales’ bilingual education policy.

Government Services and Official Language Status

Welsh government agencies can leverage this dataset to build bilingual voice-enabled services, public information systems, and citizen engagement platforms. Voice technology implements Welsh official language status practically, ensures government services are accessible in Welsh, supports linguistic equality in public sector, and facilitates citizen interaction with government in preferred language. Applications include council services, health service information, transportation systems, and emergency services in Welsh language.

Cultural Heritage and Patagonian Connection

Cultural organizations can employ this dataset to develop heritage preservation platforms, Patagonian Welsh community connections, and cultural tourism applications. Voice technology preserves unique Welsh linguistic heritage, maintains connections between Wales and Patagonian Welsh communities, supports cultural tourism showcasing Welsh traditions, and documents oral histories. Applications include virtual heritage tours, genealogy platforms connecting Welsh communities globally, and cultural experience applications.

FAQ

Q: What is included in the Welsh Speech Dataset?

A: The dataset includes 175 hours of audio from Welsh speakers in Wales and Patagonia. Contains 543 files in MP3/WAV format totaling 441 MB with linguistic annotations.

Q: Why is Welsh language technology important?

A: Welsh has official status in Wales and is undergoing revitalization. Speech technology supports Welsh language policy, enables bilingual services, facilitates language learning, and strengthens Welsh linguistic vitality for over 500,000 speakers.

Q: How does this support language revitalization?

A: Technology makes Welsh accessible to learners, supports immersion education, provides interactive learning tools, and positions Welsh as modern language relevant to digital age, crucial for successful language revitalization efforts in Wales.

Q: How diverse is the speaker demographic?

A: Dataset features 45% female and 55% male speakers with ages: 33% (18-30), 23% (31-40), 16% (40-50), 28% (50+).

Q: What applications benefit from Welsh technology?

A: Applications include language learning tools, bilingual government services, educational resources for Welsh medium schools, cultural heritage platforms, and services connecting Welsh communities in Wales and Patagonia.

How to Use the Speech Dataset

Step 1: Dataset Acquisition

Download the dataset package from the provided link. Upon purchase, you will receive access credentials and download instructions via email. The dataset is delivered as a compressed archive file containing all audio files, transcriptions, and metadata. Ensure you have sufficient storage space for the complete dataset before beginning the download process. The package includes comprehensive documentation, sample code, and integration guides to help you get started quickly.

Step 2: Extract and Organize

Extract the downloaded archive to your local storage or cloud environment using standard decompression tools. The dataset follows a structured folder organization with separate directories for audio files, transcriptions, metadata, and documentation. Review the README file for detailed information about file structure, naming conventions, and data organization. Familiarize yourself with the metadata files which contain speaker demographics, recording conditions, and quality metrics essential for effective data utilization.

Step 3: Environment Setup

Install required dependencies for your chosen ML framework such as TensorFlow, PyTorch, Kaldi, or others according to your project requirements. Ensure you have necessary audio processing libraries installed including librosa for audio analysis, soundfile for file I/O, pydub for audio manipulation, and scipy for signal processing. Set up your Python environment with the provided requirements.txt file for seamless integration. Configure GPU support if available to accelerate training processes. Verify all installations by running the provided test scripts.

Step 4: Data Preprocessing

Load the audio files using the provided sample scripts which demonstrate best practices for data handling. Apply necessary preprocessing steps such as resampling to consistent sample rates, normalization to standard amplitude ranges, and feature extraction including MFCCs (Mel-frequency cepstral coefficients), spectrograms, or mel-frequency features depending on your model architecture. Use the included metadata to filter and organize data based on speaker demographics, recording quality scores, or other criteria relevant to your specific application. Consider data augmentation techniques such as time stretching, pitch shifting, or adding background noise to improve model robustness.

Step 5: Model Training

Split the dataset into training, validation, and test sets using the provided speaker-independent split recommendations to avoid data leakage and ensure proper model evaluation. Typical splits are 70-15-15 or 80-10-10 depending on dataset size. Configure your model architecture for the specific task whether speech recognition, speaker identification, emotion detection, or other applications. Select appropriate hyperparameters including learning rate, batch size, and number of epochs. Train your model using the transcriptions and audio pairs, monitoring performance metrics on the validation set. Implement early stopping to prevent overfitting. Use learning rate scheduling and regularization techniques as needed. Save model checkpoints regularly during training.

Step 6: Evaluation and Fine-tuning

Evaluate model performance on the held-out test set using standard metrics such as Word Error Rate (WER) for speech recognition, accuracy for classification tasks, or F1 scores for more nuanced evaluations. Analyze errors systematically by examining confusion matrices, identifying problematic phonemes or words, and understanding failure patterns. Iterate on model architecture, hyperparameters, or preprocessing steps based on evaluation results. Use the diverse speaker demographics in the dataset to assess model fairness and performance across different demographic groups including age, gender, and regional variations. Conduct ablation studies to understand which components contribute most to performance. Fine-tune on specific subsets if targeting particular use cases.

Step 7: Deployment

Once satisfactory performance is achieved, export your trained model to appropriate format for deployment such as ONNX, TensorFlow Lite, or PyTorch Mobile depending on target platform. Optimize model for inference through techniques like quantization, pruning, or knowledge distillation to reduce size and improve speed. Integrate the model into your application or service infrastructure whether cloud-based API, edge device, or mobile application. Implement proper error handling, logging, and monitoring systems. Set up A/B testing framework to compare model versions. Continue monitoring real-world performance through user feedback and automated metrics. Use the dataset for ongoing model updates, periodic retraining, and improvements as you gather production data and identify areas for enhancement. Establish MLOps practices for continuous model improvement and deployment.

For detailed code examples, integration guides, API documentation, troubleshooting tips, and best practices, refer to the comprehensive documentation included with the dataset. Technical support is available to assist with implementation questions and optimization strategies.